Extended reality (xR) is a term commonly used to describe all environments and interactions that combine real and virtual elements. Whilst xR usually encompasses AR (augmented reality) and VR (virtual reality), it has a more specific meaning when used in relation to film, broadcast, and live entertainment production. In this article, we explain what that meaning is, how xR production works, and why it’s on course to become a studio staple.

Extended Reality meaning

When used as an umbrella term, xR denotes all AR and VR technologies – it’s the overarching label given to systems that integrate virtual and real worlds. In this sense, xR can be applied equally to certain motion capture techniques, augmented reality applications, or VR gaming.

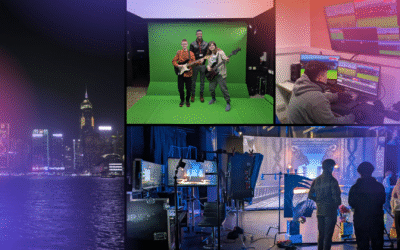

In the production world, however, it means something much more specific. xR production refers to a workflow that comprises LED screens, camera tracking systems, and powerful graphics engines.

How does xR production work?

In xR production, a pre-configured 3D virtual environment generated by the graphics engine is displayed on one or more high-quality LED screens that form the backdrop to the live action. When combined with a precision camera tracking system, the rendered perspective follows the camera as it moves in and around the virtual environment, so the real and virtual elements stay merged and locked together, creating the illusion of a single scene.
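To illustrate why camera tracking matters, here is a minimal geometric sketch, not a description of any particular product. It assumes a single flat LED wall on the plane z = 0, a tracked camera in front of it (z > 0), and a virtual object behind it (z < 0). The names `Vec3` and `project_onto_wall` are hypothetical and chosen for illustration only. For the object to appear fixed in space, it has to be drawn where the line from the camera to the object crosses the wall, and that point shifts whenever the camera moves.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Vec3:
    """A point in studio space, in metres (illustrative type)."""
    x: float
    y: float
    z: float


def project_onto_wall(camera: Vec3, virtual_point: Vec3,
                      wall_z: float = 0.0) -> Tuple[float, float]:
    """Return the (x, y) position on the wall plane z = wall_z where
    virtual_point must be drawn to line up with it as seen from camera.

    Assumes a flat wall; real stages may use curved or multi-panel walls.
    """
    dz = virtual_point.z - camera.z
    if dz == 0:
        raise ValueError("camera and virtual point are at the same depth")
    # Fraction of the way from the camera to the virtual point
    # at which the ray crosses the wall plane.
    t = (wall_z - camera.z) / dz
    if not 0 < t <= 1:
        raise ValueError("wall is not between the camera and the point")
    return (camera.x + t * (virtual_point.x - camera.x),
            camera.y + t * (virtual_point.y - camera.y))


if __name__ == "__main__":
    tree = Vec3(2.0, 1.5, -4.0)            # virtual object 4 m behind the wall
    print(project_onto_wall(Vec3(0.0, 1.5, 4.0), tree))  # (1.0, 1.5)
    print(project_onto_wall(Vec3(2.0, 1.5, 4.0), tree))  # (2.0, 1.5)
```

Moving the camera 2 m sideways moves the drawn position 1 m along the wall in this example. Without tracking data the image would stay put and the parallax would look wrong on camera.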

The benefits of xR production

Immersive production and real-time edits

Actors, hosts, and producers can see the virtual environments whilst shooting. This means that they can adapt their performances or make edits live on set, which reduces time (and budget) spent in post-production.

Lighting

Lighting is provided by the LED screens on an xR set. This helps real-world people and objects blend seamlessly into virtual environments, and further reduces the time spent adjusting lighting on set.

No colour spill or chroma key compositing

On certain green screen setups, colour spill and the need for chroma key compositing can increase the time spent in post-production. Neither applies to xR screens, which again reduces the time needed in post-production.

Rapid calibration

The calibration of camera tracking systems on xR sets takes minutes rather than hours (as can happen with green screen sets). This allows for scenes to be shot across multiple sessions with minimal disruption and preparation.

Examples of xR in production

The Mandalorian

Having experimented with xR sets in the making of The Lion King (2019), producer Jon Favreau used them to complete half of all scenes for his production of Disney’s The Mandalorian (2019). With a twenty-foot-tall LED screen wall that spanned 270°, Favreau’s team filmed scenes across a range of environments, from frozen planets to barren deserts and the insides of intergalactic spaceships. Apart from giving the cast and crew a photo-realistic backdrop to work against, the xR set saved a significant amount of time in production. Rather than continually changing location and studio setup, the design team could rapidly switch out props and partial sets inside the 75-foot-diameter volume.

Dave at the BRIT Awards

At the 2020 BRIT Awards, an xR setup was used to enhance Dave’s performance of his single ‘Black’. In a collaboration between Mo-Sys, Notch, and Disguise, a 3D animation was projected onto Dave’s piano, giving live audiences around the world an engaging visual experience. With camera tracking provided by Mo-Sys StarTracker, camera operators could move freely around the stage without any slipping or disturbance in the piano’s moving images.

HP OMEN Challenge 2019

With the rise of eSports, developers are exploring new ways to enhance gaming and bring experiences to ever larger audiences. In 2019, HP did this by broadcasting their OMEN eSports tournament live from an xR stage. There were two main benefits of using extended reality: firstly, audiences around the world could immerse themselves in the virtual environments of the game; secondly, gamers in the studio could review their gameplay from ‘within’ the game. The end result was an interactive experience that blurred the lines between the real and virtual world.

CJP Broadcast, a partner of Mo-Sys, is a market-leading integrator of virtual and augmented reality production technology. The StarTracker Studio is a complete VR and AR studio package designed for a wide range of uses – from film and broadcast to augmented reality corporate training. If you’re interested in learning more about how our technology can help your organisation, please get in touch.